
Hacking is a term most often used for the act of gaining access to computer systems or networks without approval from the system owner. Over time, it evolved, and today hacking can also be ethical, which involves obtaining approval before entry.

In real environments, illicit access can be achieved in various ways, from using stolen credentials or credentials obtained through phishing to running publicly available exploit code against exposed services or relying on tools and infrastructure built by other attackers. In some situations, access is gained with little more than timing and basic awareness of what is exposed. At the other end of the spectrum are attacks that demand deep familiarity with operating systems, applications, and browsers, where control is achieved only after several small weaknesses are combined.

This kind of work has become visible enough to support competitive hacking events. These events, like other advanced intrusion campaigns, regularly show that small flaws, when combined, can be enough to compromise otherwise well-defended systems. The pressure behind this is largely financial. Recent reporting puts the average cost of a data breach in the 4 to 5 million dollar range, and large organizations are rarely hit just once; they are tested repeatedly over time.

The same techniques are sometimes used with permission in controlled environments to identify weaknesses before they are abused. Outside of those contexts, they are used to gain access that was never intended to be granted.

The word “hacking” did not originally carry the negative meaning it has today. In early computing communities, particularly in academic and engineering environments, a "hack" referred to an inventive or unconventional solution to a technical problem. It was more about experimentation and almost nothing related to intrusion, with a strong focus on understanding how systems behaved under unusual conditions. As systems became connected and access controls took shape, those same techniques started to carry different implications when they were used without agreement. Over time, hacking became associated not just with technical ingenuity, but with the act of crossing boundaries that were deliberately put in place.

This distinction matters because hacking itself is a skill, not an outcome. The same technical abilities used to understand how systems function can be applied in authorized or unauthorized contexts. What separates legitimate activity from criminal behavior is not the level of expertise involved, but whether access is permitted and for what purpose it is obtained.

Several terms recur throughout discussions of hacking. “Unauthorized access” refers to interaction with systems or data without explicit permission. “Vulnerability exploitation” is taking advantage of weaknesses that allow such access to occur. “Systems penetration” is a broader concept of crossing security controls to reach protected resources. These terms describe conditions and actions, not motivations, and help clarify how hacking is discussed in practice.

Hacking usually begins with observation. Attackers look for places where systems respond in unexpected ways, where software behaves differently in practice than it was designed to on paper. Those small inconsistencies are then explored, reused, and combined until they provide some level of access or influence. This rarely happens through a single exploit or decisive action. It usually happens gradually, as small details add up over time, based on what systems expose and what tends to go unchecked. Attackers don't start with a full picture. They figure things out step by step by watching how systems react to minor, low-risk actions.

Recent large breaches followed this pattern: they developed inside ordinary access paths that were already present, where visibility was low, and trust was assumed rather than verified. What mattered was not ingenuity, but persistence and opportunity.

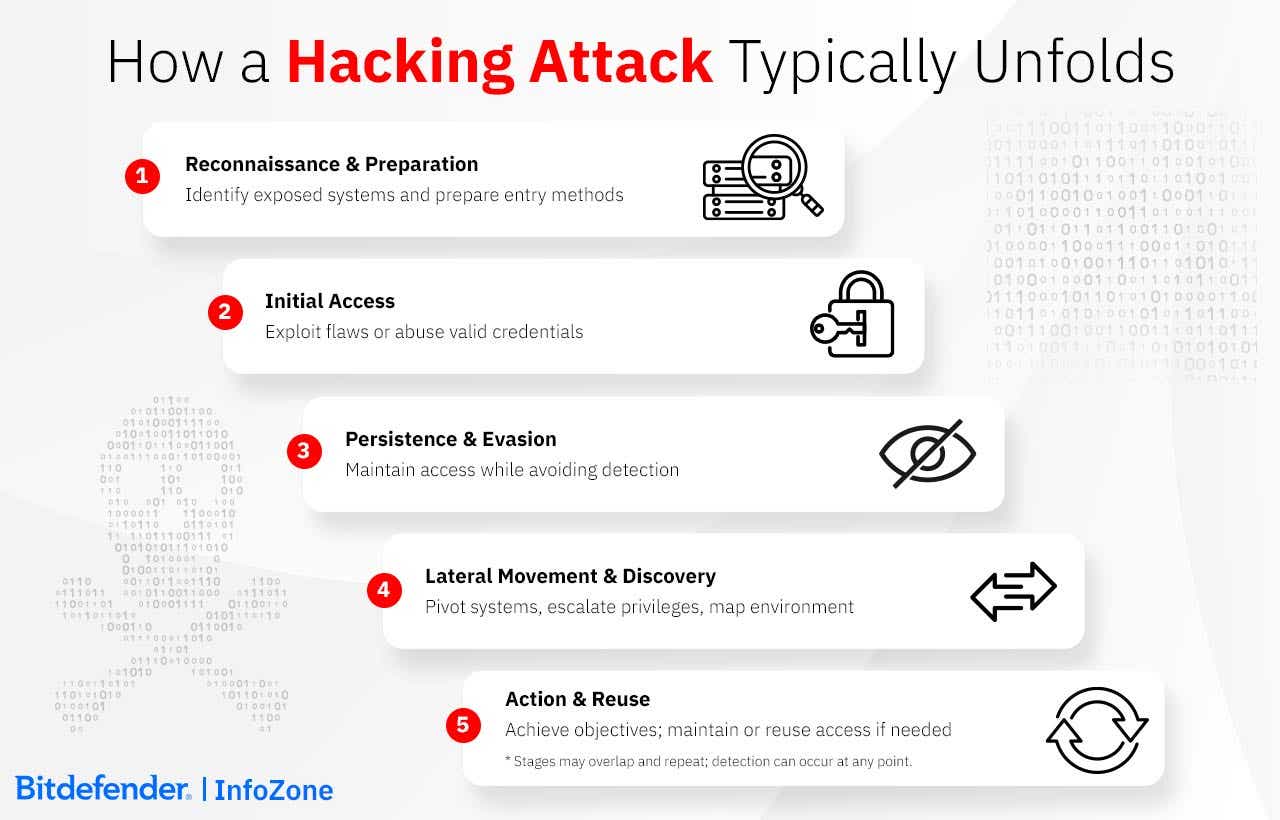

Hacking rarely follows a clean script. It is a creative endeavour that tends to move through recognizable stages, which blur together as conditions change. The phases are not always linear; they frequently overlap or repeat depending on context.

Reconnaissance. Before attempting any form of entry, seeing how far normal access already goes is often the first step. This may include identifying exposed services, reachable interfaces, or patterns in how users and systems authenticate. In enterprise environments, this information often emerges indirectly through misconfigurations, forgotten assets, or inherited trust between systems.
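To make "identifying exposed services" concrete, here is a minimal sketch of the kind of check a team might run against its own hosts. The function name `open_ports` and the port list are illustrative assumptions, and this is far simpler than what dedicated scanners do.

```python
import socket


def open_ports(host: str, ports: list[int], timeout: float = 0.5) -> list[int]:
    """Return the subset of `ports` that accept a TCP connection on `host`."""
    reachable = []
    for port in ports:
        with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as sock:
            sock.settimeout(timeout)
            # connect_ex returns 0 on success instead of raising an exception.
            if sock.connect_ex((host, port)) == 0:
                reachable.append(port)
    return reachable


if __name__ == "__main__":
    # Only check hosts you own or are explicitly authorized to test.
    print(open_ports("127.0.0.1", [22, 80, 443, 3306, 8080]))
```

Anything this returns that nobody on the team expected to be listening is a candidate for the "forgotten assets" mentioned above.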

Initial Access & Expansion. Initial access is only one point in a longer process. Once access exists, the emphasis is usually on not losing it and seeing where it already leads. Inside a network, attackers rarely build new roads. Once access is present, it behaves less like an intrusion and more like a leak. It follows whatever channels already exist and keeps flowing as long as nothing blocks it.

Persistence & Concealment. Over time, activity concentrates around what continues to work, not because it's optimal, but because it remains unnoticed. Attackers reuse whatever continues to work, like an account that remains valid, a service that responds, or a connection that is monitored infrequently. Once access is reliable, maintaining it quietly is often more valuable than expanding it, since new actions require effort, create noise, and raise the likelihood of being noticed.

| Attack | Techniques | How |
| --- | --- | --- |
| Social Engineering | Pretexting, phishing, spear phishing, impersonation, business email compromise, etc. | Work by fitting into normal communication patterns. Messages look routine and reasonable, and people respond before stopping to question context or intent. |
| Technical Exploitation | SQL injection, ransomware, brute-force and password attacks, credential stuffing, trojans, zero-day exploits, living-off-the-land techniques, and abuse of built-in administrative tools | Rely on unpatched software, weak authentication, and input handling that was never designed to face hostile use. |
| Web and Network-Based | DNS spoofing, unsecured Wi-Fi, man-in-the-middle attacks, session hijacking, lateral movement, denial-of-service attacks | Target how systems connect and trust each other, often without touching endpoints directly. Traffic is redirected, overwhelmed, or quietly observed rather than attacked overtly. |
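As an illustration of "input handling that was never designed to face hostile use," the sketch below contrasts a query built by string concatenation with a parameterized one, using Python's built-in `sqlite3` module. The table, the function names, and the sample input are assumptions made for the example.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (name TEXT, role TEXT)")
conn.executemany(
    "INSERT INTO users VALUES (?, ?)",
    [("alice", "admin"), ("bob", "user")],
)


def find_user_unsafe(name: str):
    # Vulnerable: the input is pasted into the SQL text and can change the query.
    query = f"SELECT name, role FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_user_safe(name: str):
    # Parameterized: the driver passes the input as data, never as SQL.
    return conn.execute(
        "SELECT name, role FROM users WHERE name = ?", (name,)
    ).fetchall()


hostile = "nobody' OR '1'='1"
print(len(find_user_unsafe(hostile)))  # every row matches the altered query
print(find_user_safe(hostile))         # no user has that literal name
```

The only difference between the two functions is whether the input can become part of the query itself, which is the core of SQL injection.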

Discussions about the future of hacking often focus on novelty. What tends to matter more, looking at recent years, is how existing techniques adapt to new environments and operate with less resistance.

Automation and AI are increasingly used to scan for weaknesses at scale. At the same time, cloud platforms and supply-chain dependencies introduce layers of shared access and inherited trust. Deepfake impersonation and attacks on operational technology are part of this landscape, operating alongside more established techniques rather than replacing them. One area that is still taking shape involves large language models. Prompt injection, data leakage through AI tooling, and misuse of embedded assistants don't look like traditional attacks, but they introduce new ways to influence systems indirectly.

By contrast, ideas that rely on capabilities not yet widely available, such as quantum-enabled attacks, remain largely theoretical. The more consistent influence comes from how interconnected systems expose and depend on one another.

The word “hacker” has entered the modern social psyche as an enduring image, usually of a person in a hoodie sitting in a dark room and writing complicated code on a computer with multiple screens. Reality is not as cinematic, and the label covers people who operate under very different conditions and for very different reasons, while often using similar technical skills.

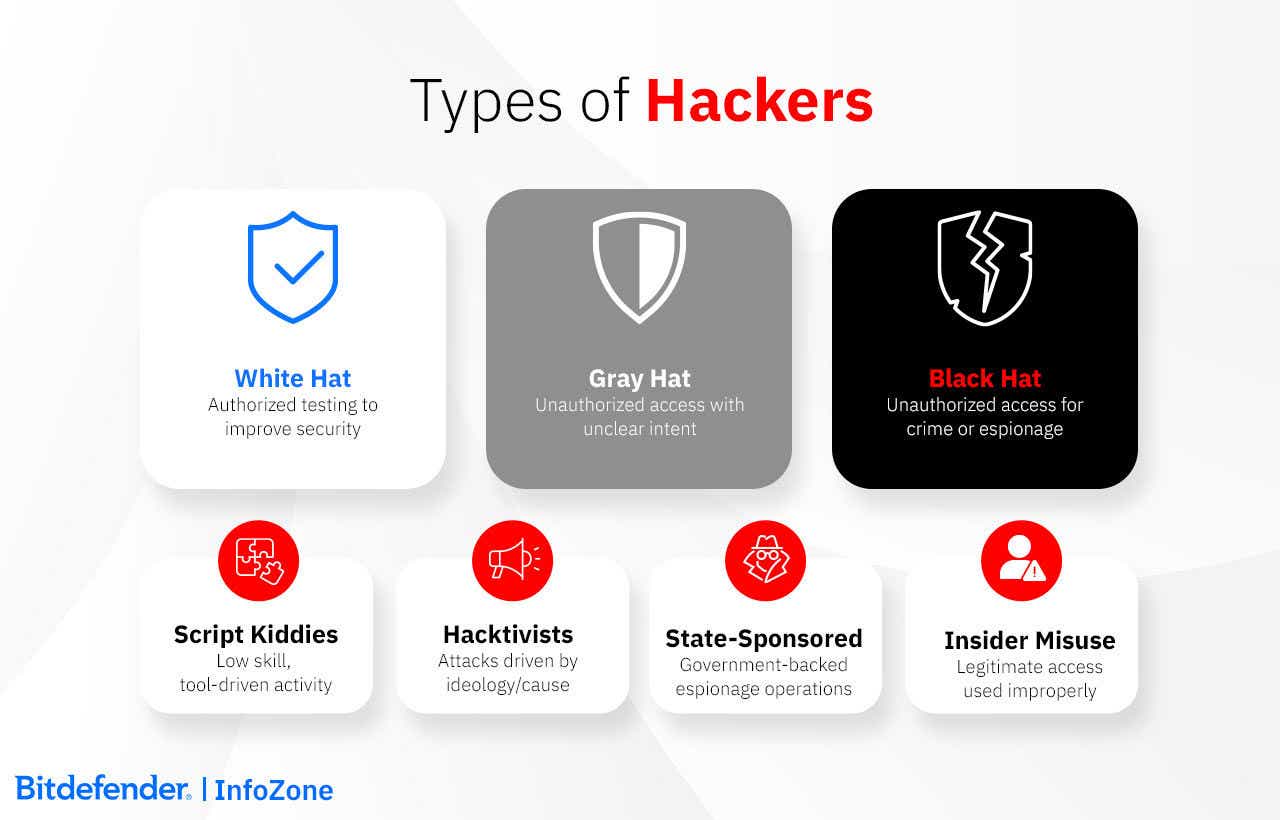

White hat hackers (also referred to as ethical hackers) are brought in by organizations on purpose. Their job is to try to break things safely so real attackers do not get there first. In effect, organizations are hiring someone to think and act like an attacker, but only within boundaries they set themselves. From the outside, the behavior can resemble a real intrusion. The difference is that the goal isn't access for its own sake, but learning what would happen if the wrong person tried the same thing.

Black hat hackers is the term for those who break in without consent, including criminals who run ransomware operations, steal customer data, or stay inside networks for espionage or future use. Many of these attacks do not rely on clever new techniques. They succeed because someone reused a password, left a system exposed, or trusted an access path that no longer made sense. Once access exists, it is often reused and traded rather than rebuilt from scratch.

Between these two groups are gray hat hackers. They access systems without permission, but not necessarily to cause damage. An example is discovering a flaw, exploiting it to prove it exists, and then notifying the organization or disclosing it publicly. As noble as the scenario looks, it carries risk. The problem is not intent, but control. Once a vulnerability is used without authorization, the organization no longer decides who knows about it, who can reproduce it, or how quickly it must be fixed. It has no way to limit how the information spreads or how the flaw is reused, which makes this type of hacking as ambiguous as its name suggests.

There are other labels for hacking and hackers, but these describe specific situations and not ethics. Script kiddies is a derogatory term for “hackers” with little technical skill who rely on ready-made tools they barely understand. Hacktivists are differentiated from black hat hackers based on a perceived moral higher ground: they attack systems to promote political or social causes instead of financial gain. Insider threats are not hackers; they have legitimate insider access that they misuse. State-sponsored hackers are part of government-backed programs.

When people talk about “hacking tools,” they’re usually referring to very different things. Some tools are about visibility, figuring out what a system exposes to the outside world. That might mean scanning networks, checking what services are reachable, or pulling together public information about infrastructure. Nmap, Shodan, Censys, and OSINT-style techniques are commonly used for this.

Other tools come into play once access has been agreed on and testing is underway, mainly to check whether existing flaws can actually be triggered. Metasploit, Burp Suite, SQLMap, Kali Linux, and Wireshark are commonly seen here.

Then there are tools focused on credentials and access. These are used to test password strength or simulate how accounts might be abused if credentials are reused or poorly protected. John the Ripper, Hashcat, and social engineering toolkits usually sit in this bucket.

| Purpose | Examples |
| --- | --- |
| Reconnaissance & Information Gathering | Nmap, Shodan, Censys, OSINT frameworks, Google Dorking |
| Vulnerability Scanning & Exploitation | Metasploit Framework, Burp Suite, SQLMap, Kali Linux, Wireshark |
| Credential & Access Abuse | John the Ripper, Hashcat, social engineering toolkits |

The tools listed above are not malicious by default. Many are used daily by security teams to test systems, validate defenses, and investigate weaknesses. What matters is permission. Used without authorization, the same tools can cause real damage and legal consequences. The categories show where tools fit into security work, not how an attack is carried out.

In most countries, hacking is illegal when it is done without permission. It does not matter much whether damage was intended or whether the person involved believed they were helping. Access that was not allowed is usually treated as a violation on its own.

In the United States, the Computer Fraud and Abuse Act focuses on accessing systems without authorization or using access in ways it was not meant to be used. Under laws like the EU’s GDPR, accessing data you were not allowed to handle can lead to serious penalties. Other regions apply comparable rules, which is why cross-border cases are often handled through international cooperation agreements, such as the Budapest Convention.

Curiosity or good intentions do not change this, according to the courts. Recent rulings continue to treat unapproved access first and foremost as exactly that: a crime.

Ethical hacking refers to activities like penetration testing or vulnerability research, and these are considered legal as long as permission exists beforehand, not after the fact. That permission is usually written down and limited in scope. If testing goes beyond what was agreed, legal protection can become void.
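Because permission is "limited in scope," testers often encode that scope so tooling refuses to touch anything outside it. The following is a minimal sketch of such a guard using Python's standard `ipaddress` module. The network ranges and the names `AUTHORIZED_SCOPE` and `in_scope` are hypothetical, standing in for whatever a written agreement actually lists.

```python
import ipaddress

# Hypothetical scope, as it might appear in a signed test agreement.
AUTHORIZED_SCOPE = [
    ipaddress.ip_network("10.20.0.0/24"),
    ipaddress.ip_network("192.0.2.16/28"),
]


def in_scope(target: str) -> bool:
    """Return True only if `target` falls inside an authorized network."""
    addr = ipaddress.ip_address(target)
    return any(addr in net for net in AUTHORIZED_SCOPE)


for candidate in ["10.20.0.15", "10.20.1.15", "192.0.2.31", "192.0.2.32"]:
    status = "in scope" if in_scope(candidate) else "OUT OF SCOPE, do not test"
    print(candidate, status)
```

A check like this does not replace the agreement itself, but it makes drifting outside the agreed boundaries harder to do by accident.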

In the 1960s and 1970s, the word “hacking” didn’t have the negative connotations it has today, being used mostly in academia and in technical circles for a clever workaround, system tinkering, or simply for pushing hardware and software beyond their intended limits. Hacking was an exploratory activity based on curiosity rather than on the desire for profit or disruption.

In the 1980s, as computers became networked, small mistakes could scale quickly and overwhelm large parts of the early internet. The Morris Worm (1988) is to this day considered a pivotal moment in a perception shift. Hacking had until then been admired as ingenuity, but after this incident, response teams and coordinated defenses were created for containment.

Commercial use of the internet changed what failure looked like. The same weak password could now unlock email, billing, and internal systems. The same exposed service could be discovered again and again. Over time, incidents stopped looking unique. They started to resemble each other.

Defensive practices didn't appear all at once. They accumulated after the same problems kept resurfacing: unpatched systems, unnoticed access, and weak controls leading to predictable outcomes. Over time, patching, monitoring, and access controls became standard, not because they were novel, but because ignoring them kept producing the same failures.

Incidents in the 2010s began to look different from earlier break-ins. Instead of quick theft, many involved attackers remaining inside environments, moving slowly and acting selectively. In the 2020s, automation and platform integration reduced the effort required to maintain that kind of access.

In the National Public Data breach, roughly 2.9 billion records were exposed. The incident was not tied to a new exploit. It stemmed from long-term data collection and retention practices, which resulted in the exposure of records accumulated over multiple years.

The Salt Typhoon intrusions affected multiple telecommunications networks. Access was present for extended periods and was not immediately followed by disruption or service outages. Activity involved continued access to internal systems within telecommunications infrastructure.

Other incidents during the same period involved a range of organizations, including Snowflake customers, Microsoft's Midnight Blizzard breach, and several telecommunications providers. In these cases, compromised accounts remained active for extended periods. Valid credentials continued to allow access, and security alerts were not consistently triggered across affected environments.

The Change Healthcare ransomware attack affected systems used by hospitals and pharmacies. Disruptions to those systems led to delays in prescriptions and other clinical services.

Early hacking history includes a limited set of well-known cases, among them Kevin Mitnick and Kevin Poulsen. In those cases, routine trust played a larger role than technical sophistication. Both ultimately faced prosecution, and both later reappeared on the defensive side of the industry, carrying firsthand knowledge of where systems and organizations tend to fail.

The Anonymous collective is famous for using hacking to protest and gain visibility rather than money, although it managed to do that with mixed results and is currently experiencing declining influence.

Over the years, security measures were usually added in response to events. Incidents, lawsuits, outages, and public attention preceded most changes. Controls tightened after failures became costly. Responsibility followed system growth, not the other way around.

Most attacks don’t start because someone "forgot" security. They usually begin with small things that teams overlook. Maybe an old integration that nobody has reviewed in a long time. Maybe a service left running after a project wrapped up. Or permissions that kept growing as people moved around.

These easy openings are what hackers use, so protection can begin with simple checks, but it should not stop there. Look at what your systems run on today and make sure those choices still hold up, something professional teams check often. Otherwise, you find out only when something goes wrong. That is why routine checks and people who know the environment count for more than any single guideline.
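One way to picture a routine check is a small script over an asset inventory that flags anything without an owner or without a recent review. The sketch below assumes a hypothetical inventory format (`Asset`, `last_reviewed`) and an arbitrary 180-day threshold; real inventories and review policies will differ.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional


@dataclass
class Asset:
    name: str
    owner: Optional[str]  # None means nobody currently claims it
    last_reviewed: date


def needs_review(assets: List[Asset], today: date, max_age_days: int = 180) -> List[str]:
    """Return names of assets that are unowned or not reviewed recently."""
    cutoff = today - timedelta(days=max_age_days)
    return [
        a.name
        for a in assets
        if a.owner is None or a.last_reviewed < cutoff
    ]


inventory = [
    Asset("billing-api", "payments-team", date(2024, 5, 1)),
    Asset("legacy-ftp-integration", "ops", date(2022, 11, 3)),
    Asset("demo-server", None, date(2024, 4, 20)),
]
print(needs_review(inventory, today=date(2024, 6, 1)))
```

With the sample data, the old integration and the unowned demo server are flagged, which matches the kinds of leftovers described above.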

The main things to focus on are reducing what is visible from the outside, improving what you can see on the inside, and knowing how the organization responds when something looks wrong. Small improvements in these three areas do more for protection than any new tool on its own.

Recent AI advances have made some tasks easy to run at scale, and attackers use that to their advantage. Phishing messages look more natural, and scanners can check for exposed systems with very little effort.

Defenders use AI as well, mainly to notice small changes in behavior that would get lost in day-to-day activity. A login at an unusual time or a process touching files it never interacts with are signals that are easy to miss without help.
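The "login at an unusual time" signal can be shown with a deliberately simple rule. This is not machine learning and not how any particular product works; it is a hand-written baseline comparison, with the function names and the two-hour tolerance chosen only for illustration.

```python
from typing import List


def hour_distance(a: int, b: int) -> int:
    """Distance between two hours on a 24-hour clock (23 and 1 are 2 apart)."""
    diff = abs(a - b) % 24
    return min(diff, 24 - diff)


def is_unusual_login(history_hours: List[int], login_hour: int, tolerance: int = 2) -> bool:
    """Flag a login whose hour is far from every hour seen before for this user."""
    if not history_hours:
        return True  # no baseline yet, so treat as worth a look
    return all(hour_distance(login_hour, h) > tolerance for h in history_hours)


usual = [8, 9, 9, 10, 17]
print(is_unusual_login(usual, 11))  # close to the 10:00 logins
print(is_unusual_login(usual, 3))   # far from anything in the history
```

Real detection systems weigh many such signals together, but the underlying idea is the same: compare new activity against what is normal for that user.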

The risk comes from how these tools are handled. Attackers try to blend their actions into normal traffic, and employees can leak internal data when they paste sensitive information into public AI systems.

Overall, AI changes the pace. It allows both sides to repeat routine actions far more often than before.

Most intrusions don't hinge on a single method. They succeed because something is exposed, controls drift, or small issues are ignored. GravityZone approaches this as one problem, not several. It brings the preventive and investigative parts together so teams can spot conditions that hackers turn into access.

Prevention starts by shrinking what attackers can use. Risk Management and Patch Management expose the misconfigurations, old software, and day-to-day behavior that open the door. External Attack Surface Management adds the outside view, often catching systems people forgot they even had. This often includes systems or services that were set up for a reason at some point, then forgotten or assumed to be harmless. Once they are visible, they tend to be reused.

In environments where attackers avoid dropping malware, Proactive Hardening and Attack Surface Reduction (PHASR) focuses on how built-in tools are used. It limits access to utilities that are convenient for everyday administration, but also convenient for living-off-the-land activity, making those paths less reliable without breaking normal workflows.

During an intrusion, the hard part is not finding data, it's knowing what to pay attention to. Normal activity produces so much noise that suspicious behavior blends in. GravityZone EDR and XDR combine signals from across the environment so teams can trace how a breach started and what it touched. Network Attack Defense adds coverage for movement across the network and repeated login attempts that often look routine on their own.
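To show why repeated login attempts "look routine on their own" but stand out in aggregate, here is a minimal sliding-window counter. It is an illustrative sketch, not a description of how GravityZone detects this; the event format, thresholds, and the name `flag_bursts` are assumptions.

```python
from collections import defaultdict, deque
from typing import Iterable, Set, Tuple

Event = Tuple[float, str, bool]  # (timestamp in seconds, source, login succeeded)


def flag_bursts(events: Iterable[Event], max_failures: int = 5,
                window_seconds: float = 60.0) -> Set[str]:
    """Return sources with at least `max_failures` failed logins inside the window.

    `events` is assumed to be sorted by timestamp.
    """
    recent = defaultdict(deque)  # source -> timestamps of recent failures
    flagged = set()
    for ts, source, success in events:
        if success:
            continue
        window = recent[source]
        window.append(ts)
        # Drop failures that have fallen out of the time window.
        while window and ts - window[0] > window_seconds:
            window.popleft()
        if len(window) >= max_failures:
            flagged.add(source)
    return flagged


sample = [(t, "10.0.0.5", False) for t in (0, 10, 20, 30, 40)]
sample += [(t, "10.0.0.9", False) for t in (0, 100, 200, 300, 400)]
sample.sort(key=lambda e: e[0])
print(flag_bursts(sample))
```

Both sources fail five times, but only the one that does so within a minute is flagged; each individual failure would be unremarkable on its own.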

For organizations that need continuous oversight, Bitdefender MDR provides round-the-clock monitoring and response. Offensive Security Services, including penetration testing and red teaming, offer a controlled way to test whether defenses hold up under real-world pressure.

In cybersecurity, there's rarely a clean signal that says something went wrong. Most of the time, it comes down to familiarity and basic hygiene, to noticing when systems just don't behave the way they usually do. That's why training and awareness matter more than waiting for a warning that's easy to spot. When access is being misused, the clues tend to be small. A password changes without you touching it. A login pops up from a place that doesn't match your day. Your device might feel off - settings shift, new apps show up, or it slows down for no clear reason. One sign doesn't say much, but a few together often do.

Antivirus software still plays a role, but it isn't the safety net people sometimes expect, even though the tools themselves have become more advanced, using behavior and patterns rather than simple signatures. They do stop a lot of things, but they can't stop every intrusion because many intrusions work without malware. If an attacker logs in with a valid password or talks someone into letting them in, there's nothing for antivirus to catch. The same goes for the misuse of normal system tools. That's why simple practices (updates, healthy suspicion, and knowing what “normal” looks like) matter just as much as the software.

Many companies start by checking things themselves. Teams look at configs, account rights, and update status, but they stick to the access they already have. When they need a closer look, they bring in penetration testers. Those testers work only from what was agreed on in writing, which is what keeps everything on the right side of the law. Testing without clear approval, even when the intent is defensive, can quickly become a legal problem.