AI Is Turbocharging Scams Worldwide, INTERPOL Warns

A new INTERPOL report has raised an alarm over the rapid evolution of global financial fraud, warning that increasingly sophisticated scams — powered by artificial intelligence, cryptocurrencies and organized crime networks — are expanding in scale, reach and impact.

Key takeaways:

- AI is accelerating global fraud: Scammers are using AI, deepfakes and automation to scale attacks and make them more convincing than ever.

- Fraud is becoming industrialized: “Fraud-as-a-service” platforms and cheap digital tools are lowering the barrier to entry for cybercriminals.

- Deepfakes are a growing threat: Voice and video cloning can convincingly impersonate trusted individuals, enabling highly targeted scams.

- Classic scams still dominate: Investment scams, romance scams, advance-fee fraud and business email compromise (BEC) remain the most common, but they have grown more sophisticated.

The INTERPOL Global Financial Fraud Threat Assessment 2026 highlights how fraud has become one of the most pervasive forms of transnational crime, affecting individuals, businesses and governments worldwide. Criminal groups are leveraging advanced technologies and service-based cybercrime models to launch highly convincing and scalable attacks, often at minimal cost and with little technical expertise.

“Enabled by artificial intelligence, low-cost digital tools and increased global criminal collaboration, we are witnessing the industrialization of fraud,” said INTERPOL Secretary General Valdecy Urquiza. “It is vital to remember that the cost of financial crime is not just money – it is people’s life savings, their dignity, and in the worst case, their life.”

AI, crypto, and ‘fraud-as-a-service’

According to INTERPOL, artificial intelligence, large language models and deepfake technologies are helping criminals craft more personalized and harder-to-detect scams.

These tools are often combined with cryptocurrency-based payment systems and underground marketplaces offering phishing kits, ransomware tools and fraud infrastructure “as a service.”

This industrialization of fraud means attackers no longer need advanced technical skills to operate sophisticated campaigns.

The report also underscores the growing involvement of transnational organized crime groups, some of which use human trafficking to fuel large-scale scam operations.

Victims are coerced into working in illicit call centers, often carrying out so-called “pig-butchering” scams—a hybrid of romance and investment fraud typically involving cryptocurrency.

Most common fraud types persist

Despite technological advances, many fraud schemes remain rooted in familiar tactics. INTERPOL identifies the most prevalent global fraud types as:

- Investment scams

- Advance-payment fraud

- Romance scams

- Business email compromise (BEC)

However, these schemes are now more convincing and scalable due to automation, data harvesting and AI-driven personalization. The report also draws attention to “agentic AI,” systems that can autonomously plan and execute fraud campaigns from start to finish.

Cloned voices and faces

INTERPOL warns that Dark Web marketplaces offer applications that can clone voices and faces from just a few seconds of genuine audio or video, enabling criminals to impersonate celebrities or people close to their intended victims.

Examples abound. In 2024, a family from San Francisco suffered a horrific ordeal after scammers used voice-cloning technology to try to persuade the parents to pay their son’s bail.

Read: ‘Mom, I Crashed the Car!’: Scammers Clone Son’s Voice to Ask Parents for $15,000 Bailout

In a similar case, a Florida woman was conned out of $15,000 when scammers cloned her daughter’s voice to stage a desperate call for help following a fabricated car crash. The case drew national attention, spotlighting the growing danger of AI-powered voice scams.

Read: Florida Woman Loses $15K to AI Voice Scam Mimicking Daughter in Distress

A borderless threat

INTERPOL warns that financial fraud thrives on anonymity, cross-border operations and rapid adaptability, making it difficult for authorities to track and disrupt networks.

The report notes a global surge in AI-enhanced fraud, notably sextortion and investment scams, as well as impersonation schemes such as fake kidnappings for ransom.

Recent global operations illustrate both the scale of the problem and the need for coordinated action. In one major crackdown spanning 72 countries, authorities dismantled tens of thousands of malicious systems linked to phishing and ransomware campaigns, highlighting the industrial scale of cyber-enabled fraud.

The international police organization stresses that tackling financial fraud requires closer collaboration between governments, law enforcement and the private sector, as well as improved intelligence sharing across borders.

Advice for consumers

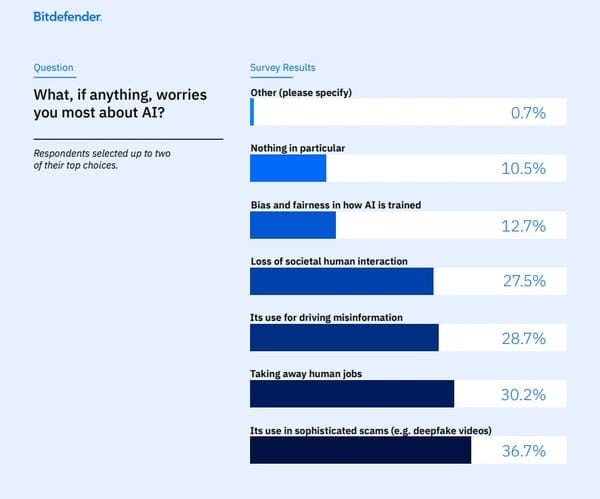

In the 2025 Bitdefender Consumer Cybersecurity Survey, consumers in seven countries stated loud and clear that they are concerned about AI being weaponized to commit fraud and deception.

While AI promises incredible advances, 37% of respondents named its use in sophisticated scams (e.g., deepfake videos) as their top concern about the technology, ranking it above job loss and misinformation.

As scams and fraud grow more sophisticated and harder to detect, we strongly advise consumers to adopt a cautious and proactive approach to digital interactions:

- Be wary of urgent or emotionally charged messages, especially if they involve money. Scammers frequently impersonate family members, colleagues or authorities, using AI-generated voices or deepfakes to create panic and pressure victims into quick decisions.

- Verify requests through a second channel. If you receive a call or message claiming to be from a loved one or institution, hang up and contact the person or organization directly using a trusted number.

- Limit what you share online. Publicly available photos, videos and voice clips can be used to train AI tools for impersonation scams.

- Use strong, unique passwords and enable multi-factor authentication (MFA) on financial and email accounts to reduce the risk of unauthorized access.

- Be cautious with investment opportunities that promise high or guaranteed returns, particularly those involving cryptocurrencies. These remain among the most common and costly fraud schemes globally.

- Consider using reputable security software and stay informed about emerging scam tactics.

- When in doubt about an unsolicited phone call, text or social media interaction, use Scamio, our free scam detector and prevention service. Simply describe your situation and let Scamio guide you to safety.

You may also want to read:

Meta launches new anti-scam tools on WhatsApp, Facebook and Messenger

FAN Courier SMS Scam Targets 1 Million Romanians in Massive WhatsApp Takeover Campaign

War as a Hook: How Fraudsters Are Using the Israel-Iran Crisis to Target Netizens


Author

Filip has 17 years of experience in technology journalism. In recent years, he has focused on cybersecurity in his role as a Security Analyst at Bitdefender.
